Collective Minds

Radiology

TYPE

B2B/B2C SaaS

DURATION

4 months

PRIMARY TOOLS

Figma, FigJam, Miro, GitHub, Grain

TEAM

Product Designer, 2 x Customer Success, 4 x Devs, 1 x Product Manager, 1 x Tech Lead

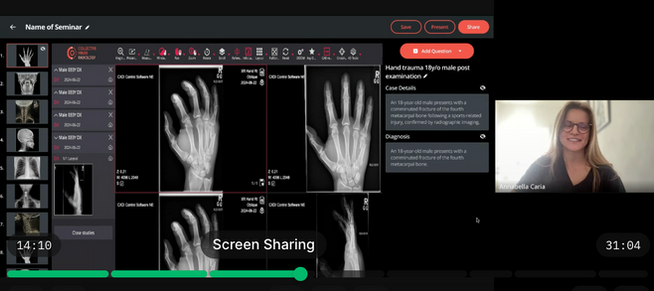

Seminar Feature:

For educators to enhance interactive learning

The goal was to streamline knowledge assessment within training sessions, making education more engaging and efficient while reducing manual workload for educators.

Collective Minds connects radiology professionals through a global, cloud-based platform built for sharing DICOM cases, collaborating faster, and improving patient outcomes. But when I looked closely at how people teach with those cases, I noticed that the content was there and the expertise was there, yet the teaching experience still felt stitched together.

Educators were juggling tools, audiences were losing the thread, and the most valuable moments, such as guided reasoning, were falling short. Most concerning for the business, we were losing customers. I wanted to understand where DICOM education was breaking down and what 'good' looked like for educators and learners.

Running a great case-based seminar sounds simple: pick some cases, walk the audience through the reasoning, and discuss decisions together. But once I mapped the end-to-end teaching workflow, it became clear why it’s so hard to do well, both inside a busy clinical day and on our platform: radiologists are extremely time-poor, seminar setup is fragmented across tools, and 'finding interesting cases' is tedious.

Time is the main constraint

Preparing a session competes with clinical workload, so anything that isn’t fast, reusable, and low-friction tends to get deprioritised.

Teaching is tool-fragmented

A 'teaching session/seminar' often means juggling multiple tools: cases in one place, slides in another, polling somewhere else, and attendance and links in email.

Case-based teaching is valued, but hard to orchestrate live

Educators want learners to reason step-by-step, not jump to the answer, yet it’s easy to accidentally reveal the report/diagnosis too early.

Finding the right case is harder than it should be

Educators don’t want just 'any case'; they need teachable cases (a clear learning objective, the right level of difficulty, interesting). Limited filtering makes discovery and reuse tedious on Collective Minds.

Participation needs to be frictionless (and safe)

If joining, answering polls, or viewing content requires extra logins, links, or switching devices, fewer people participate, and quieter learners disappear first.

Figuring out what to build

After the research, the next challenge was focus. 'Education' is a big space inside Collective Minds, and a dozen pain points surfaced across educators, residents, and IT teams. To design something shippable and valuable, we needed to align on one primary problem to solve first, the one that would unlock the most impact with the least friction.

I framed a high-level problem statement to anchor the work: Radiology educators struggle to run structured, case-based seminars because preparing and delivering a session requires switching between multiple tools and manually controlling what learners see. In a busy clinical day, this friction makes teaching difficult to repeat.

From insights to priorities

Rather than jumping straight into screens, I ran a set of cross-functional workshops with product, engineering, and clinical stakeholders to translate research into decisions. The goal was to build shared clarity on:

- what was core vs nice-to-have

- what was feasible in the short term

- what would meaningfully move adoption and engagement

What we decided to build first

From the workshops, we aligned on a first release idea that focused on making seminars feasible, not perfect. In practice, that meant prioritising capabilities that reduce prep time, keep teaching in one continuous flow, and enable controlled reveal during case-based discussion.

Hypothesis:

If we introduce a Seminar Feature that integrates our DICOM viewer with presentation tools and interactive polling, we will reduce teaching session prep time by 50% and increase student engagement by 30%, as educators and learners will no longer need to juggle 3-4 separate tools.

Seminar MVP value pillars:

🧩 Turn cases into a seminar: structured sequence, reusable sessions.

🧠 Controlled reveal for case-based teaching: guided reasoning.

🗳️ In-session engagement: built-in polling without switching tools.

🔗 Simple, secure access: easy joining with minimal friction.

With the scope and hypothesis set, I moved into low- to mid-fidelity exploration, mapping the end-to-end seminar flow, then user-testing early prototypes and iterating with educators and residents before committing to high-fidelity UI.


Now it was time to put the high-fidelity flow through the wringer.

With the mid-fi structure validated, we moved into high-fidelity prototypes to test the details that make or break live teaching: speed of setup, clarity of controls, and whether residents could participate without friction. To keep the team aligned (and to make success measurable), we set clear OKRs for the first release.

Objective and Key Results

Enable radiology educators to create and deliver high-quality teaching sessions more efficiently with an all-in-one, interactive Seminar tool, reducing prep time, increasing learner engagement, and supporting business growth.

⚡ Run case-based DICOM seminars in one tool instead of 3-4.

⏱️ Reduce teaching preparation time from 1h40m to under 20 minutes.

📈 Increase learner engagement by 30% (poll participation, questions submitted, or active attendance).

🧰 80%+ of seminars are created and delivered without being abandoned.

✅ 70%+ of presenters complete the flow without needing support.

From prototype to build

Once the high-fidelity flow tested well against our OKRs, I partnered closely with engineering to translate the prototype into a buildable plan, breaking the experience into clear states, edge cases, and measurable events.

Hand-off, tickets, and build readiness

- A set of tickets broken down by functional slices (seminar creation, polling, controlled reveal, join flow)

- Acceptance criteria for each ticket (what 'done' means in UI + behavior)

- Interaction specs for tricky moments (loading states, errors, permissions, and latency handling)

Soft launch to de-risk the live environment

Because teaching sessions happen in messy real-world conditions (hospital Wi-Fi, heavy cases, mixed devices), we planned a soft launch before a wider rollout:

- Released to a small set of education-heavy accounts / 'champions'

- Monitored performance and friction (load times, join success, poll participation)

- Ran short feedback loops with educators and residents

- Iterated quickly before expanding availability

With build scope locked and rollout planned, I moved into the final prototype and UI details.

Launch and brace for impact

Even with inevitable edge cases surfacing after launch, we kept iterating in tight feedback loops, and within the first month the impact was clear. Our new Seminar Feature:

🧰 Reduced the educator workflow from an average of 3 tools to 1

⏳ Cut seminar prep time (5 cases + 5 questions) from ~1h40m to ~14 mins

🙋♂️ Boosted learner participation (poll response rates increased by ~26%)

🏥 3 new institutions subscribed

⬆️ 6 existing education customers upgraded their membership

⭐ Shifted from a 'nice-to-have' to a MUST HAVE tool for radiology education
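As a quick sanity check, the reported figures can be compared against the original hypothesis and OKRs. This is only a minimal sketch in Python: the numbers are the ones quoted in this case study, while the variable and function names are made up for illustration and are not part of the product.

```python
# Figures quoted in the case study; all names here are illustrative assumptions.
PREP_BEFORE_MIN = 100             # ~1h40m of prep before the Seminar Feature
PREP_AFTER_MIN = 14               # ~14 minutes after launch
PREP_TARGET_MIN = 20              # OKR: under 20 minutes
HYPOTHESIS_PREP_REDUCTION = 0.50  # hypothesis: cut prep time by 50%
ENGAGEMENT_TARGET = 0.30          # OKR: +30% learner engagement
POLL_RESPONSE_LIFT = 0.26         # reported: poll response rates up ~26%


def relative_reduction(before: float, after: float) -> float:
    """Return the fractional reduction from `before` to `after`."""
    return (before - after) / before


prep_reduction = relative_reduction(PREP_BEFORE_MIN, PREP_AFTER_MIN)

print(f"Prep time reduction: {prep_reduction:.0%}")
print(f"Beats 50% hypothesis: {prep_reduction > HYPOTHESIS_PREP_REDUCTION}")
print(f"Under 20-minute OKR: {PREP_AFTER_MIN < PREP_TARGET_MIN}")
print(f"Poll lift vs 30% target: {POLL_RESPONSE_LIFT:.0%} of {ENGAGEMENT_TARGET:.0%}")
```

On these numbers, prep time fell by roughly 86%, well past the 50% hypothesis and inside the 20-minute OKR. The ~26% lift in poll response rates sits just under the 30% engagement target, although that OKR also allowed for questions submitted and active attendance, which are not reported here.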